Cassini has been in the news lately: Wired.com recently featured a story on Cassini and I was interviewed by eCommerceBytes. Given the interest in the topic, this week’s post is more on the inside Cassini story.

The Beginning

At the end of 2010, we decided that we needed a new search platform. We’d done great work in improving the existing Voyager search engine, and it had contributed substantially to the turnaround of eBay’s Marketplaces business. But it wasn’t a platform for the future: we needed a new, modern platform to innovate for our customers and business.

We agreed as a team to build Cassini, our new search engine platform. We started Cassini around October 2010, with a team of a few folks led by industry veteran Nick Whyte.

Voyager

Our Voyager search engine was built around 2002. It’s delivered impressively and reliably for over ten years. It’s a good old workhorse. But it isn’t up to what we need today: it was architected before many of the modern advances in how search works, having launched before Microsoft began its search effort and before Google’s current generation of search engine.

We’ve innovated on top of Voyager, and John Donahoe has discussed how our work on search has been important. But it hasn’t been easy and we haven’t been able to do everything we’ve wanted – and our teams want a new platform that allows us to innovate more.

Cassini

Since Voyager was named after the late 1970s space probes, we named our new search engine after the Cassini probe, launched in 1997. It’s a symbolic way of saying it’s still a search engine, but a more modern one.

In 2010, we made many fundamental decisions about the architecture and design principles of Cassini. In particular, we decided that:

- It’d support searching over vastly more text; by default, Voyager lets users search over the title of our items and not the description. Cassini would allow search over the entire document

- We’d build a data center automation suite to make deployment of the Cassini software much easier than deploying Voyager; we also set out a vision for provisioning machines, fault remediation, and more

- We’d support sophisticated, modern approaches to search ranking, including being able to process a query in multiple rounds of ranking (where the first round is fast and approximate, and later rounds are intensive and accurate)
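
To make the multi-round ranking idea concrete, here is a minimal sketch in Python. It is not Cassini’s code: the function names, the toy scoring functions, and the `title`/`description` fields are all assumptions made up for illustration. The shape is the point: a cheap score trims the candidates to a shortlist, and a more expensive score orders only that shortlist.

```python
from typing import Callable, Dict, List

Doc = Dict[str, object]  # hypothetical item record with "id", "title", "description"


def cheap_score(query: str, doc: Doc) -> float:
    """Round 1 (illustrative): count distinct query terms that appear in the title."""
    terms = set(query.lower().split())
    return float(len(terms & set(str(doc["title"]).lower().split())))


def expensive_score(query: str, doc: Doc) -> float:
    """Round 2 (illustrative): weighted term counts over title and description."""
    title = str(doc["title"]).lower().split()
    description = str(doc["description"]).lower().split()
    return sum(
        2.0 * title.count(term) + 1.0 * description.count(term)
        for term in query.lower().split()
    )


def multi_round_rank(
    query: str,
    docs: List[Doc],
    first_round: Callable[[str, Doc], float] = cheap_score,
    second_round: Callable[[str, Doc], float] = expensive_score,
    shortlist_size: int = 100,
    top_k: int = 10,
) -> List[Doc]:
    # Round 1: fast, approximate score over every candidate; keep a shortlist.
    shortlist = sorted(docs, key=lambda d: first_round(query, d), reverse=True)
    shortlist = shortlist[:shortlist_size]
    # Round 2: slower, more accurate score, applied to the shortlist only.
    reranked = sorted(shortlist, key=lambda d: second_round(query, d), reverse=True)
    return reranked[:top_k]
```

The trade-off is visible in the sketch: an item that the first round drops never reaches the second round, however well it would have scored there, so the shortlist has to be large enough to make that rare.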

Cassini is a true start-from-scratch rewrite of eBay’s search engine. Because of the unique nature of eBay’s search problem, we couldn’t use an existing solution. We also made a clean break from Voyager – we wanted to build a new, modular, layered solution.

Cassini in 2011

January 2011 marked the real beginning of the project. We’d been working for three or four months as a team of five or so, and we’d sketched out some of the key components. In 2011, we set the project in serious motion – adding more folks to the core team to begin to build key functionality, and embarking on the work needed to automate search. We also began to talk about the project externally.

Cassini in 2012

In 2012, we’d grown the team substantially from the handful of folks who began the project at the end of 2010. We’d also made substantial progress by June – Cassini was being used behind-the-scenes for a couple of minor features, and we’d been demoing it internally.

In the second half of 2012, we began to roll out Cassini for customer-facing scenarios. Our goal was to add value for our customers, but also to harden the system and understand how it performs when it’s operating at scale. This gave us practice in operating the system, and forced us to build many of the pieces that are needed to monitor and operate the system.

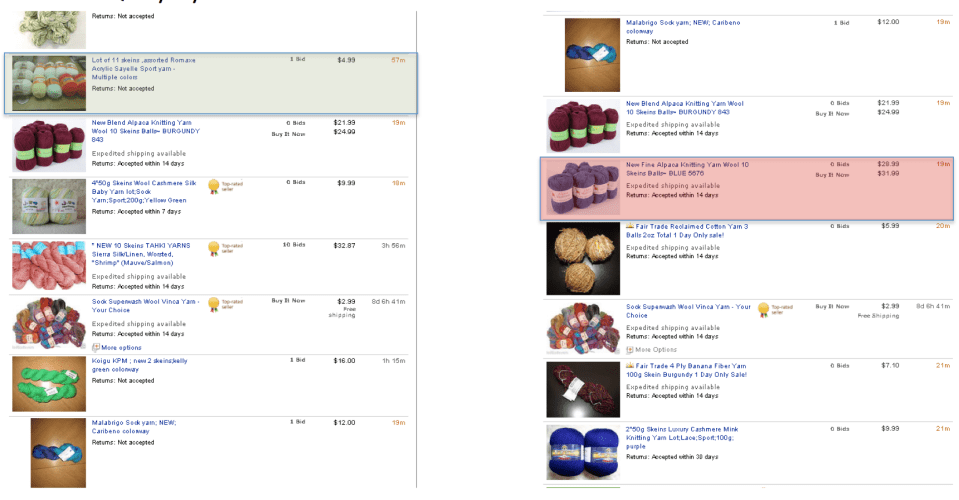

The two scenarios that we rolled out Cassini for in 2012 were Completed Search worldwide, and null and low search in North America. Mark Carges talked about this recently at eBay’s 2013 Analysts’ Day.

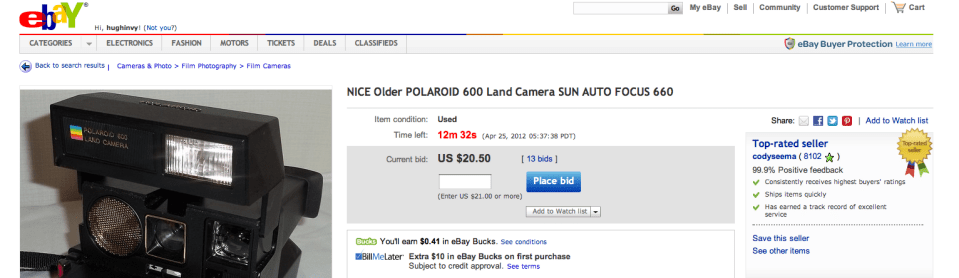

Completed search is the feature that allows our customers to search listings that have ended, sold or not; it’s a key way that our customers do price research, whether for pricing an item they’re selling or to figure out what to pay when they’re buying. Because Cassini is a more scalable technology, we were able to provide search over 90 days of items rather than the 14 days that Voyager has always offered.

When users got no results from a query (or very few), Voyager offered a very basic solution – no results and a few suggested queries that you might try (this is still what you’ll see on, for example, the Australian site today). Cassini could do much more – it could help us try related queries and search more data to find related matches. After customer testing, we rolled out Cassini in North America, the UK, and Germany to support this scenario – a feature that’s been really well received.

Cassini in May 2013

In January 2013, we began testing Cassini for the default search with around 5% of US customers. As I’ve discussed previously, when we make platform changes, we like to aim for parity for the customers so that it’s a seamless transition. That’s our goal in testing – replace Voyager with a better platform, but offer equivalent results for queries. The testing has been going on with 5% of our US customers almost continuously this year. Parity isn’t easy: it’s a different platform, and our Search Science and Engineering teams have done great work to get us there.
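
As an aside for readers wondering how a fixed slice of traffic can be routed to a new engine, one common approach is to hash a stable user identifier into buckets. The sketch below assumes that approach; it is not a description of how eBay assigns customers to tests, and the experiment name, function names, and user IDs are hypothetical.

```python
import hashlib


def in_test_group(
    user_id: str,
    experiment: str = "cassini-default-search",  # hypothetical experiment name
    percent: float = 5.0,
) -> bool:
    """Deterministically place roughly `percent` of users in the test group."""
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to one of 10,000 buckets.
    bucket = int.from_bytes(digest[:8], "big") % 10_000
    return bucket < percent * 100


def choose_engine(user_id: str) -> str:
    # The same user always lands on the same engine for the life of the test.
    return "cassini" if in_test_group(user_id) else "voyager"
```

Hashing on a stable ID keeps each customer on one engine for the whole test, which matters when the goal is parity: the same person should not see results flip between platforms from one query to the next.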

We’ve recently launched Cassini in the US market. If you’re querying on ebay.com, you’re using Cassini. It’s been a smooth launch – our hardening of the platform in 2012 and our extensive testing in 2013 have set us on a good path. Cassini doesn’t yet do anything much different to Voyager: for example, it isn’t by default searching over descriptions yet.

Cassini in 2013 and beyond

We’ll begin working on making Cassini the default search engine in other major markets later in the year. We’re beginning to test it in the UK, and we’ll work on Germany soon too. There’s also a ton of logistics to cover in launching the engine: Cassini requires thousands of computers in several data centers.

We’ll also begin to innovate even faster in making search better. Now that we’ve got our new platform, our search science team can begin trying new features and work even faster to deliver better results for our customers. As a team, we’ll blog more about those changes.

We have much more work to do in replacing Voyager. Our users run more than 250 million queries each day, but many more queries come from internal sources such as our selling tools, on-site merchandising, and email generation tools. We need to add features to Cassini to support those scenarios, and move them over from Voyager.

It’ll be a while before Voyager isn’t needed to support a scenario somewhere in eBay. We’ll then turn Voyager off, celebrate a little, and be entirely powered by Cassini.

See you next week.