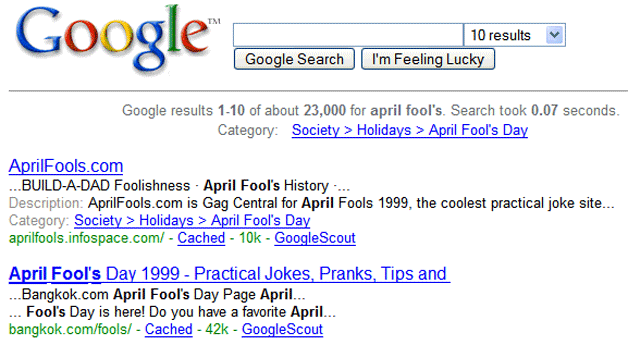

Remember when Google launched into our consciousness? Below you’ll see what you probably saw back in 1999: a clean, simple results page with ten blue links.

Google search results, circa 1999

Google was a marked contrast to the other search engines of the era: AltaVista, Excite, Lycos, Looksmart, Inktomi, Yahoo!, Northern Light, and others.

What was different about Google? Most people talked about three things:

- PageRank, the use of the web’s link structure in search ranking. The perception was that Google found more relevant results than its competitors. Here’s the original paper

- Its clean page design. It wasn’t a link farm, there was plenty of white space, and an absence of advertising (banner or otherwise) for the first couple of years

- Its speed and its collection size. Google felt faster, reported fast search times, claimed a large index (remember when they used to say how many documents they had on the home page?), and showed large result set counts for your queries (result set estimation is a fun game – I should write a blog post about it)

What struck me most was the way Google presented result summaries on the search results page. Google’s result summaries were contextual: they were constructed of fragments of the documents that matched the query, with the query words highlighted. This was a huge step forward from what the others were doing: they were mostly just showing the first hundred or more characters of the document (which often was a mess of HTML, JavaScript, and other rubbish). With Google, you could suddenly tell if the result was relevant to you — and it made Google’s results page look incredibly relevant compared to its competitors.

And that’s what this blog post is about: contextual result summaries in search, also known as snippets.

Snippets

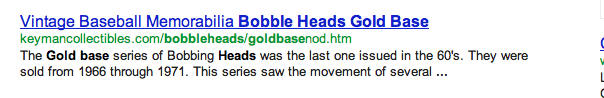

Snippets are the summaries on the search results page. There are typically ten of them per results page. Take a look at the result below from the Google search engine; it’s one of the ten snippets for the query gold base bobblehead.

A Google Snippet

The snippet is designed to help you make a decision: is this relevant to my information need or not? It helps you decide whether to invest a click. It’s important: it’s key to our impression of whether a search engine works well. The snippets are the bulk of the results page, they’re displayed below the brand of the search engine, and together they represent what the search engine found in response to your query. If they’re irrelevant, your impression of the search engine is negative, regardless of whether the underlying pages are relevant.

The snippet has three basic components:

- The title of the web document, usually what’s in the HTML <title> tag. In this case, “Vintage Baseball Memorabilia Bobble Heads Gold Base”

- A representation of the URL of the web resource, which you can click on to visit the document on the web. In this case, “keymancollectibles.com/bobbleheads/goldbasenod.htm”

- (Usually) a query-biased summary: one, two, or three fragments extracted from the document that are contextually relevant to the query. In this case, “The Gold base series of Bobbing Heads was the last one issued in the 60’s. They were sold from 1966 through 1971. This series saw the movement of several …”

What’s happening in the world of snippets

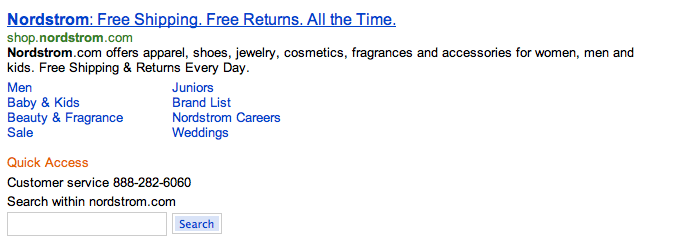

All search engines show quicklinks or deeplinks for navigational queries. Navigational queries are those that are posed by users when they want to visit a specific location on the web, such as Google, Microsoft, or eBay. Take a look at the snippet below from Bing for the query nordstrom. What you can see below the snippet are links that lead to common pages on the nordstrom.com site including “Men”, “Baby & Kids”, and so on; these are what’s known in the industry as quicklinks or deeplinks. When I was managing the snippets engineering team at Bing, we worked on enhancing snippets for navigational queries to show phone numbers, related sites, and other relevant data. The example for the query nordstrom shows the phone number and a search box for searching only the nordstrom.com site.

Nordstrom navigational query snippet from Bing

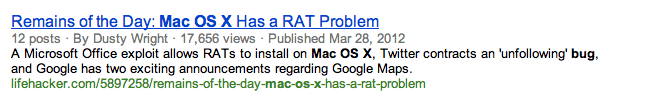

At Bing, we also invented custom snippets for particular sites or classes of sites. For example, below is a snippet that’s customized for a forum site. You can see that it highlights the number of posts, author, view count, and publication date separately from the query-biased summary.

Custom snippet for a forum site from Bing

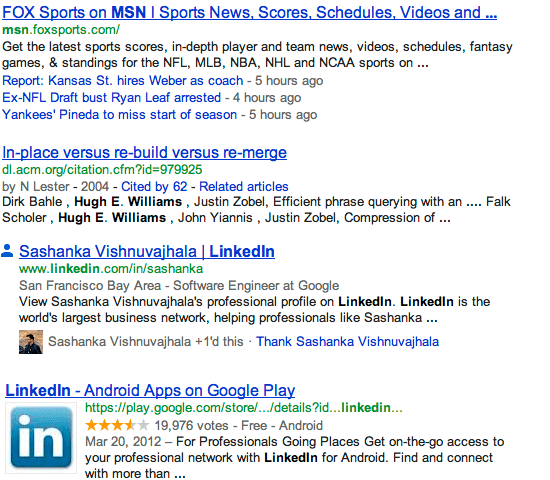

While Bing pioneered it, Google has certainly got in on the game — I’ve included below a few different Google examples for snippets from LinkedIn, Google Play, Google Scholar, and Fox Sports.

Custom Google snippets for LinkedIn, Google Scholar, Google Play, and Fox Sports

What’s all this innovation for? It’s to help users make better decisions about whether or not to click, and to occasionally give extra links to click on. The Fox Sports snippet shows the top three headlines from the site, and that’s perhaps what the user wants when they query for Fox Sports (they don’t really want to go to the home page, they want to read a top story). For the Google Play and Scholar snippets, there’s information in the snippet that tells us whether the result is authoritative: has it been cited by other papers many times? Has it been voted on many times? The LinkedIn result extracts key information and presents it separately: the city that the person lives in and who they work for. It also includes some data from Google+.

Query-Biased Summaries

Google didn’t invent query-biased summaries; Tassos Tombros and Mark Sanderson invented them in this 1998 paper.

The basic method for producing a query-biased summary goes like this:

- Process the user’s query using the search index, and get a list of web resources that match (typically ten of them, and typically web [HTML] pages)

- For each document that matches:

  - Find the location of the document in the collection

  - Seek and read the document into memory

  - Process the document sequentially and:

    - Extract all fragments that contain one or more query words (or related terms; you get these from the query rewriting service)

    - Assign a score to each fragment that approximates its likely relevance to the query

  - Find the best few fragments based on the best few scores (one, two, or three)

  - Neaten up the best fragments so they make sense (try and make them begin and end nicely on sentence boundaries or other sensible punctuation)

  - Stitch the fragments together into one string of text, and save this somewhere

- Show the query-biased summaries to the user
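The per-document steps above can be sketched in a few lines of Python. This is only a minimal illustration under simplifying assumptions, not how any production search engine does it: fragments are whole sentences (so they already begin and end on sentence boundaries), the score is just the number of distinct query words in the fragment, and there is no query rewriting. The function names and the third sentence of the sample document are made up for the example.

```python
import re

def tokenize(text):
    """Lowercase the text and return its alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def split_fragments(text):
    """Split text into sentence-like fragments after '.', '!' or '?'."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def score_fragment(fragment, query_terms):
    """Stand-in score: how many distinct query words the fragment contains."""
    return len(set(tokenize(fragment)) & query_terms)

def summarize(document, query, max_fragments=2):
    """Build a query-biased summary from the best-scoring fragments."""
    query_terms = set(tokenize(query))
    scored = [
        (score_fragment(fragment, query_terms), index, fragment)
        for index, fragment in enumerate(split_fragments(document))
    ]
    # Keep only fragments that contain at least one query word.
    matching = [item for item in scored if item[0] > 0]
    # Best scores first; ties go to the fragment nearer the document start.
    best = sorted(matching, key=lambda item: (-item[0], item[1]))[:max_fragments]
    # Put the chosen fragments back in document order before stitching.
    best.sort(key=lambda item: item[1])
    return " ... ".join(fragment for _, _, fragment in best)

# Sample document: two sentences from the snippet above plus one invented sentence.
doc = (
    "The Gold base series of Bobbing Heads was the last one issued in the 60's. "
    "They were sold from 1966 through 1971. "
    "Collectors prize the gold base bobblehead today."
)
print(summarize(doc, "gold base bobblehead"))
```

With this sample, the first and third sentences are selected and joined with an ellipsis, while the middle sentence is dropped because it contains no query words.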

A few techniques make snippet generation faster:

- Don’t process the entire document. In general, the fragments that are the best summaries are nearer the start of the documents, and so there are diminishing returns in processing the entirety of large documents. It’s also possible to reorganize the document, by selecting fragments that are very likely to appear in snippets, and putting them together at the start of the document

- Cache the popular results. Save the query-biased summary after it’s computed, and reuse it when the same query and document pair is requested again

- Cache documents in memory. It’s been shown that caching just 1% of the documents in memory saves more than 75% of the disk seeks (which turn out to be a major bottleneck)

- Compress the documents so they’re fast to retrieve from disk. Better still, compress the query words, and compare them directly to the compressed document. This is the subject of a paper I wrote with Dave Hawking, Andrew Turpin, and Yohannes Tsegay a few years ago. We showed that this makes producing snippets around twice as fast as without compression
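Two of these ideas, scanning only the start of each document and caching the summary for each query and document pair, can be sketched with Python's standard `functools.lru_cache`. Everything else here is an assumption for illustration: the `DOCUMENTS` dictionary stands in for a real document store, `MAX_CHARS` is an arbitrary cutoff, and `first_matching_sentence` is a deliberately crude summarizer.

```python
from functools import lru_cache

MAX_CHARS = 200  # assumed cutoff: only the start of each document is scanned

# Hypothetical stand-in for the document store.
DOCUMENTS = {
    "doc1": "Bobbleheads were popular in the 1960s. The gold base series came last.",
}

def first_matching_sentence(text, query):
    """Return the first sentence that shares a word with the query, else ''."""
    terms = set(query.lower().split())
    for sentence in text.split(". "):
        words = set(sentence.lower().replace(".", "").split())
        if terms & words:
            return sentence.strip()
    return ""

@lru_cache(maxsize=1024)
def cached_summary(query, doc_id):
    """Summarize a document prefix; repeated (query, doc_id) pairs hit the cache."""
    prefix = DOCUMENTS[doc_id][:MAX_CHARS]
    return first_matching_sentence(prefix, query)

print(cached_summary("gold base", "doc1"))  # computed on the first call
print(cached_summary("gold base", "doc1"))  # served from the cache
print(cached_summary.cache_info())
```

An LRU policy is a natural fit for the "cache the popular results" idea because frequently repeated pairs stay resident while rare ones are evicted, though a real system would also need to invalidate entries when a document changes.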

Most search folks don’t think about the ranking techniques that are used in the snippet generator. The big ranking teams at major search companies focus on matching queries to documents. But the snippet generator does do interesting ranking: its task is to figure out the best fragments in the document that should be shown to the user, from all of the matching fragments in the document. Tombros and Sanderson discuss this briefly: they scored sentences using the square of the number of query words in the sentence divided by the number of query words in the query. In short, the more query words in the fragment, the better. I’d recommend adding a factor that gives more weight to fragments that are closer to the beginning of the document. There are also other things to consider: does the fragment make sense (is it a complete sentence)? Which of the query words are most important, and are those in the fragment? Does the set of fragments we’re showing cover the set of query terms? You could even consider how the set of query-biased summaries gives an overall summary of the topic. I’m sure there are lots of interesting ideas to try here.
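Here is a small sketch of the score described above, plus one possible position factor. Two things are my assumptions rather than anything from the paper: "query words in the sentence" is read as the number of distinct query words that appear, and the position weighting (dividing by `1 + decay * index`, with `decay` picked arbitrarily) is just one simple way to favor earlier fragments.

```python
import re

def tokenize(text):
    """Lowercase the text and return its alphanumeric words."""
    return re.findall(r"[a-z0-9]+", text.lower())

def query_score(sentence, query):
    """(distinct query words found in the sentence) squared / (query words)."""
    query_terms = set(tokenize(query))
    if not query_terms:
        return 0.0
    matched = len(query_terms & set(tokenize(sentence)))
    return matched ** 2 / len(query_terms)

def position_weighted_score(sentence, query, index, decay=0.1):
    """Discount the score by sentence position (index 0 is the first sentence).

    The 1 / (1 + decay * index) form and the default decay are illustrative
    assumptions, not a published formula.
    """
    return query_score(sentence, query) / (1.0 + decay * index)

sentence = "The gold base series was the last one issued."
print(query_score(sentence, "gold base bobblehead"))
print(position_weighted_score(sentence, "gold base bobblehead", index=10))
```

For the query gold base bobblehead, a sentence containing two of the three query words scores 4/3, and with the assumed decay the same sentence at index 10 scores half of that.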

Snippets have always fascinated me, and I believe their role in search is critical to users, unsung, and important to building a great search engine.

Hope you enjoyed this post — if you did, please share it with your friends through your favorite social network. There’ll be a new post next Monday…